Projects

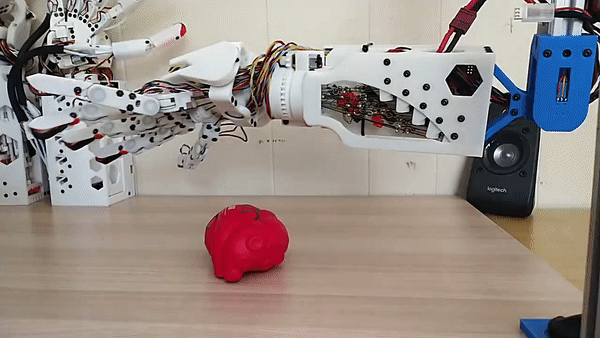

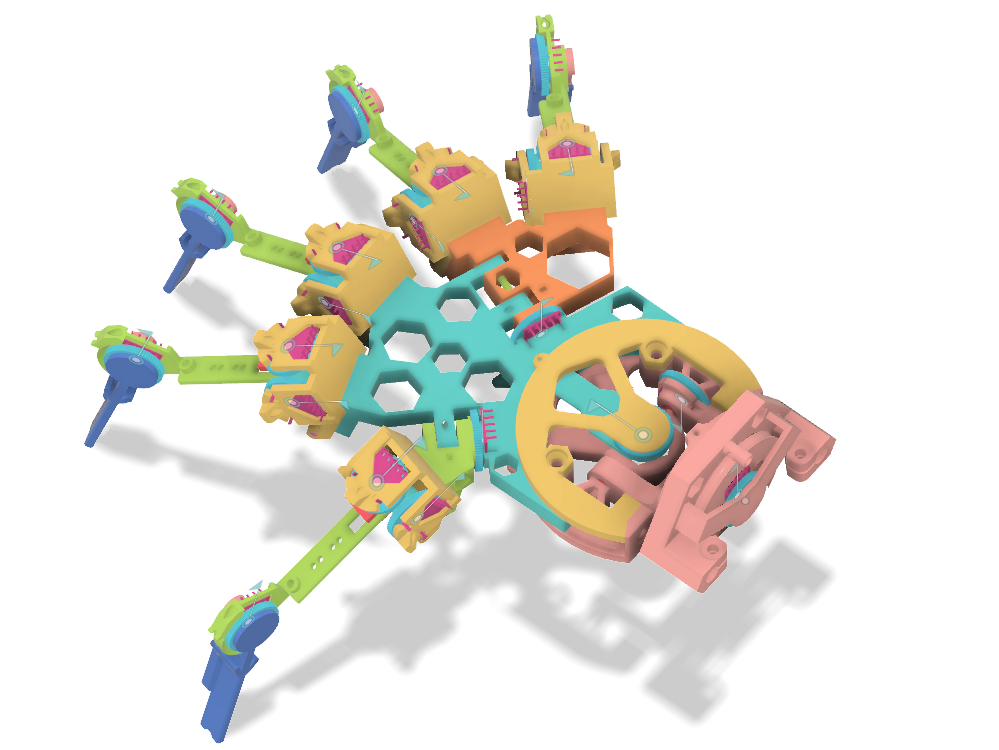

Hextech Mechahand v13

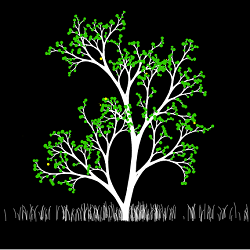

The robot hand design went through another iteration, now with a flexible circuit integrated into the palm and fingers that powers in-place position sensors and touch/force sensing. The integrated sensors greatly simplify wiring and assembly, as the flexible circuit modules plug into each other and use a single connection to the main driver board on the forearm. The main driver board has also dropped wires in favour of soldering the motor leads directly into the board. The tendons are carefully routed through a flexible wrist cable protector widget and channeled directly to the motor spindles in the forearm for easy assembly. The name of the game was wire management, for both electrical wiring and tendons. The motor power and sizes have also doubled! ... and yet, they need to double once more for good usability! The hand is controlled via camera hand detection.

Hextech Mechahand, Alpha Release

I designed an open source robotic hand as a low cost standard for reinforcement learning on dexterity. The hand has 20 degrees of freedom, mimicking a human range of motion. Each of the joints has position feedback and force sensing, with 6 extra pressure sensors in the fingertips and palm. All electronics feed into a compact PCB with WiFi connectivity. The bill of materials is just under $300 per hand when amortized over 5 hands.

Hextech Mecha-hand, First Assembly

Got my first 3D printer a month ago, so naturally my first project after printing Benchy is to make a 20 degree of freedom mecha-hand. Because simple beginner stuff, right?! Turns out the engineer was inside me all along, and I now have a functioning, near full human movement, mechanical arm. Well, I have the physical components assembled, but not the electronics to make it usable yet. I’m working on those now!

Really Good Papers (part 1)

I’ve started a challenge to read a machine learning paper a day. Now, after a few months and a lot of catching up, I’ve gone through a couple hundred papers.

These are my picks for the best ones I found:

3D Modelling a Joystick

The joystick from your Xbox controller is made of a whopping 12 individual parts! To teach myself 3D modelling I’ve copied the design, in painstaking detail, in Fusion 360. Check my model out on Thingiverse or this animation:

Duolingo Chinese

After 6 months of practicing Chinese every day I’ve finally finished the course on Duolingo. Now with a lexicon of 1800 Chinese characters I can proudly say I’ve reached the proficiency of a 1st grader.

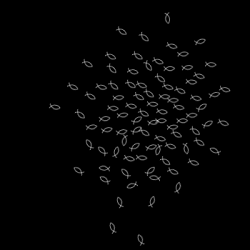

Cell Membrane Simulation

I simulated the behaviour of cell membranes for a research collaboration with my wife, Catalina Spatarelu, and her colleague Dung Nguyen. It’s written in Observable using a literate programming style; that is, prose, code and visualisation intermixed. Visualisations are all done using d3 and the physics interactions are optimised using a quadtree. The goal of the project is to analyse the jamming/unjamming transition, and a poster has been accepted for presentation at CMBE 2019.
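To illustrate why a quadtree speeds up the physics interactions, here is a minimal sketch in Python (the actual simulation runs in Observable with d3, so this is not the project's code). The class name `Quadtree`, the leaf `capacity` and the `MAX_DEPTH` cap are all assumptions made for the example, and points are assumed to lie inside the root square. The idea is that a neighbour query only visits cells that overlap the search circle instead of checking every particle.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Point = Tuple[float, float]
MAX_DEPTH = 16  # assumed cap so coincident points cannot split forever


@dataclass
class Quadtree:
    x0: float            # left edge of this square cell
    y0: float            # bottom edge of this square cell
    size: float          # side length of the cell
    capacity: int = 4    # points a leaf may hold before it splits
    depth: int = 0
    points: List[Point] = field(default_factory=list)
    children: Optional[List["Quadtree"]] = None

    def _child_for(self, p: Point) -> "Quadtree":
        # Route by comparing against the cell midpoint.
        half = self.size / 2
        ix = 1 if p[0] >= self.x0 + half else 0
        iy = 1 if p[1] >= self.y0 + half else 0
        return self.children[2 * iy + ix]

    def insert(self, p: Point) -> None:
        if self.children is not None:
            self._child_for(p).insert(p)
            return
        self.points.append(p)
        if len(self.points) > self.capacity and self.depth < MAX_DEPTH:
            half = self.size / 2
            self.children = [
                Quadtree(self.x0 + ix * half, self.y0 + iy * half, half,
                         self.capacity, self.depth + 1)
                for iy in (0, 1) for ix in (0, 1)
            ]
            # Push the leaf's points down into the new children.
            pending, self.points = self.points, []
            for q in pending:
                self._child_for(q).insert(q)

    def query(self, x: float, y: float, r: float) -> List[Point]:
        """Return stored points within distance r of (x, y)."""
        # Skip the whole cell if the circle's bounding box misses it.
        if (x + r < self.x0 or x - r > self.x0 + self.size or
                y + r < self.y0 or y - r > self.y0 + self.size):
            return []
        if self.children is None:
            return [p for p in self.points
                    if (p[0] - x) ** 2 + (p[1] - y) ** 2 <= r * r]
        found: List[Point] = []
        for child in self.children:
            found.extend(child.query(x, y, r))
        return found
```

In a particle simulation, each step would rebuild the tree from the current positions and call `query` once per particle with its interaction radius, so only nearby particles are tested for contact.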

It's a Stick-up (part 1)

My first project on my quest to build a walking robot using neural nets. It’s my physical build of the classic pendulum control problem. The goal is to swing a stick up and keep it balanced so it stays upright. Turns out, reinforcement learning is pretty hard, so I can’t show a working version yet. The build I have so far is a Raspberry Pi connected to 4 servos through a control board I programmed. There’s a string tied to the servos on which a stick is held, and on the stick there’s a gyroscope. The Raspberry Pi connects through websockets to my computer, where I sample the measurements and output the servo positions. Hopefully an actor-critic algorithm will control it soon, but for now, here’s me fiddling with it.
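For a feel of the balancing half of the problem, here is a minimal simulation sketch in Python. It is not the code running on the rig: the stick length, time step and gains are hypothetical, the control input is treated as a direct angular acceleration rather than servo-and-string actuation, and a hand-tuned PD rule (`pd_policy`) stands in for the actor-critic policy that does not work yet.

```python
import math

G = 9.81        # gravity, m/s^2
LENGTH = 0.5    # assumed stick length in metres (hypothetical)
DT = 0.01       # simulation time step in seconds (hypothetical)


def pd_policy(theta, omega, kp=60.0, kd=10.0):
    """Hand-tuned stand-in for a learned policy: push back against tilt and spin."""
    return -kp * theta - kd * omega


def simulate(theta0, steps=500, policy=pd_policy):
    """Integrate an inverted pendulum where theta = 0 means upright."""
    theta, omega = theta0, 0.0
    history = []
    for _ in range(steps):
        u = policy(theta, omega)                     # control, as angular acceleration
        alpha = (G / LENGTH) * math.sin(theta) + u   # gravity tips the stick, u corrects
        omega += alpha * DT                          # semi-implicit Euler step
        theta += omega * DT
        history.append(theta)
    return history


balanced = simulate(0.2)                              # starts tilted by 0.2 rad
falling = simulate(0.2, policy=lambda th, om: 0.0)    # no control at all
```

With the PD rule the tilt decays towards zero, while the uncontrolled stick falls over. A learned policy would replace `pd_policy` with a function of the same shape that maps the gyroscope readings to a command.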

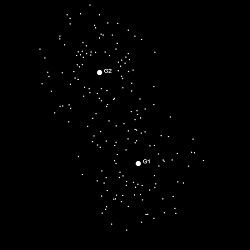

Faces GAN

For the final project of Udacity’s Deep Learning course I built a faces generator using a generative adversarial network. The gist of this approach is to build two competing neural networks, one to generate fake images and one to detect whether an image is fake or real. By training both networks in parallel we eventually end up with a generator network that produces images that look just like our dataset, and we discard the detector network. For this project we used the CelebA dataset of celebrity pictures so the network learned to generate ~~celebrity~~ creepy faces.
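The competition between the two networks is usually written as a minimax game. The formula below is the standard objective from the original GAN formulation; whether the course project used exactly this loss is an assumption. Here $G$ is the generator, $D$ is the detector (discriminator), $x$ is a real image from the dataset and $z$ is random noise fed to the generator:

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The detector tries to push $D(x)$ towards 1 for real images and $D(G(z))$ towards 0 for fakes, while the generator tries to make $D(G(z))$ as large as it can.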

Older Projects

Single page animations and games.
